Qualia is the research agent that ideates, runs experiments, and collaborates with you.

from lm_lab import TransformerLM, Trainer
from lm_lab.data import WikiText103
import matplotlib.pyplot as plt

model = TransformerLM(
    n_layers=12, n_heads=12, d_model=768,
    max_seq_len=1024, dropout=0.1,
).cuda()

print(f"Parameters: {model.num_parameters():,}")
Parameters: 124,439,808

trainer = Trainer(

model,

dataset=WikiText103(),

lr=6e-4, weight_decay=0.1,

scheduler="cosine", min_lr=6e-5,

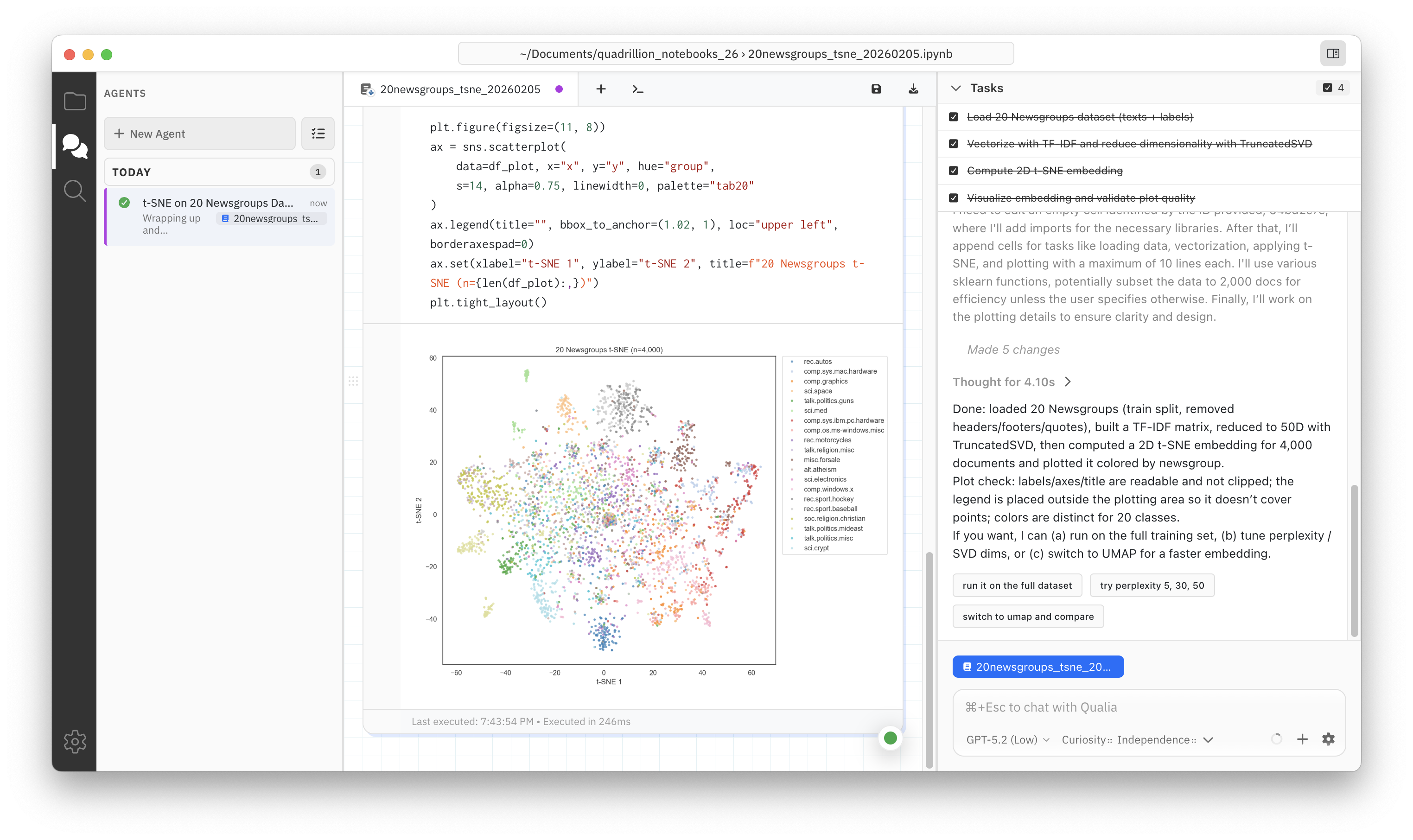

)Qualia runs wherever Jupyter does. Chat with it to clean data, make beautiful visualizations, and fit models—just by asking.
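The parameter count printed in the snippet above is consistent with a GPT-2-small-style layout. Here is a minimal sketch of that arithmetic. It assumes a 50,257-token vocabulary, learned positional embeddings, a 4x-wide MLP, biases on every linear layer, and tied input/output embeddings. The snippet states none of these, and `gpt2_style_param_count` is a hypothetical helper, not part of `lm_lab`.

```python
def gpt2_style_param_count(n_layers, d_model, max_seq_len, vocab_size=50257):
    """Count parameters of a GPT-2-style decoder (assumed layout, see lead-in).

    vocab_size=50257 is an assumption (the GPT-2 BPE vocabulary); the output
    projection is assumed to share weights with the token embedding.
    """
    token_emb = vocab_size * d_model
    pos_emb = max_seq_len * d_model

    # Attention: fused Q/K/V projection plus output projection (weights + biases).
    attn = (d_model * 3 * d_model + 3 * d_model) + (d_model * d_model + d_model)

    # MLP: expand to 4 * d_model, then project back (weights + biases).
    d_ff = 4 * d_model
    mlp = (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)

    # Two LayerNorms per block, each with a scale and a bias vector.
    layer_norms = 2 * 2 * d_model

    per_block = attn + mlp + layer_norms
    final_ln = 2 * d_model

    return token_emb + pos_emb + n_layers * per_block + final_ln


total = gpt2_style_param_count(n_layers=12, d_model=768, max_seq_len=1024)
print(f"Parameters: {total:,}")  # Parameters: 124,439,808
```

Under these assumptions the head count does not change the total, because the heads only partition the existing `d_model`-wide projections.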

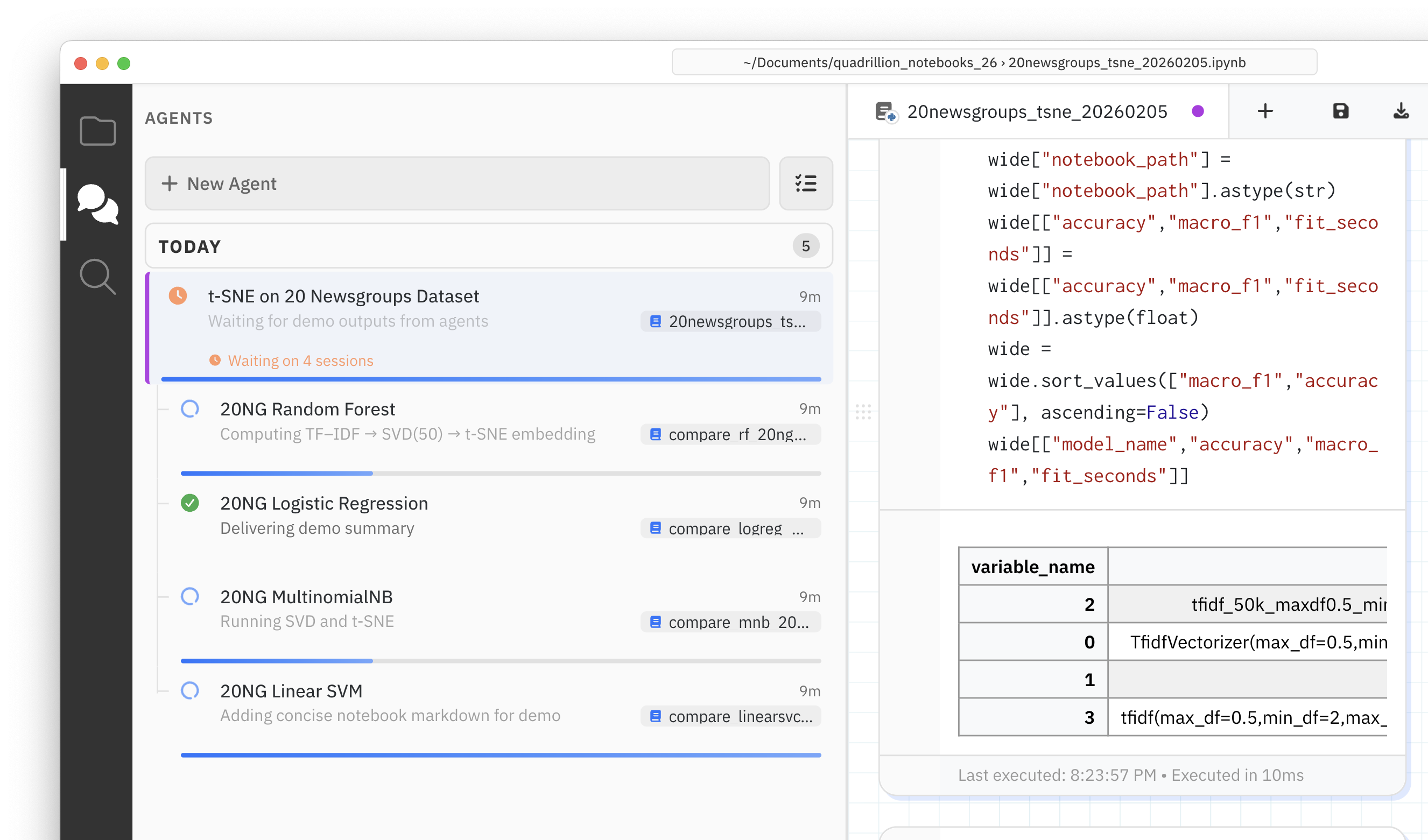

Never leave an idea on the table again. Qualia coordinates parallel agents so you can iterate on promising model variants and re-examine that featurization choice you made last week.

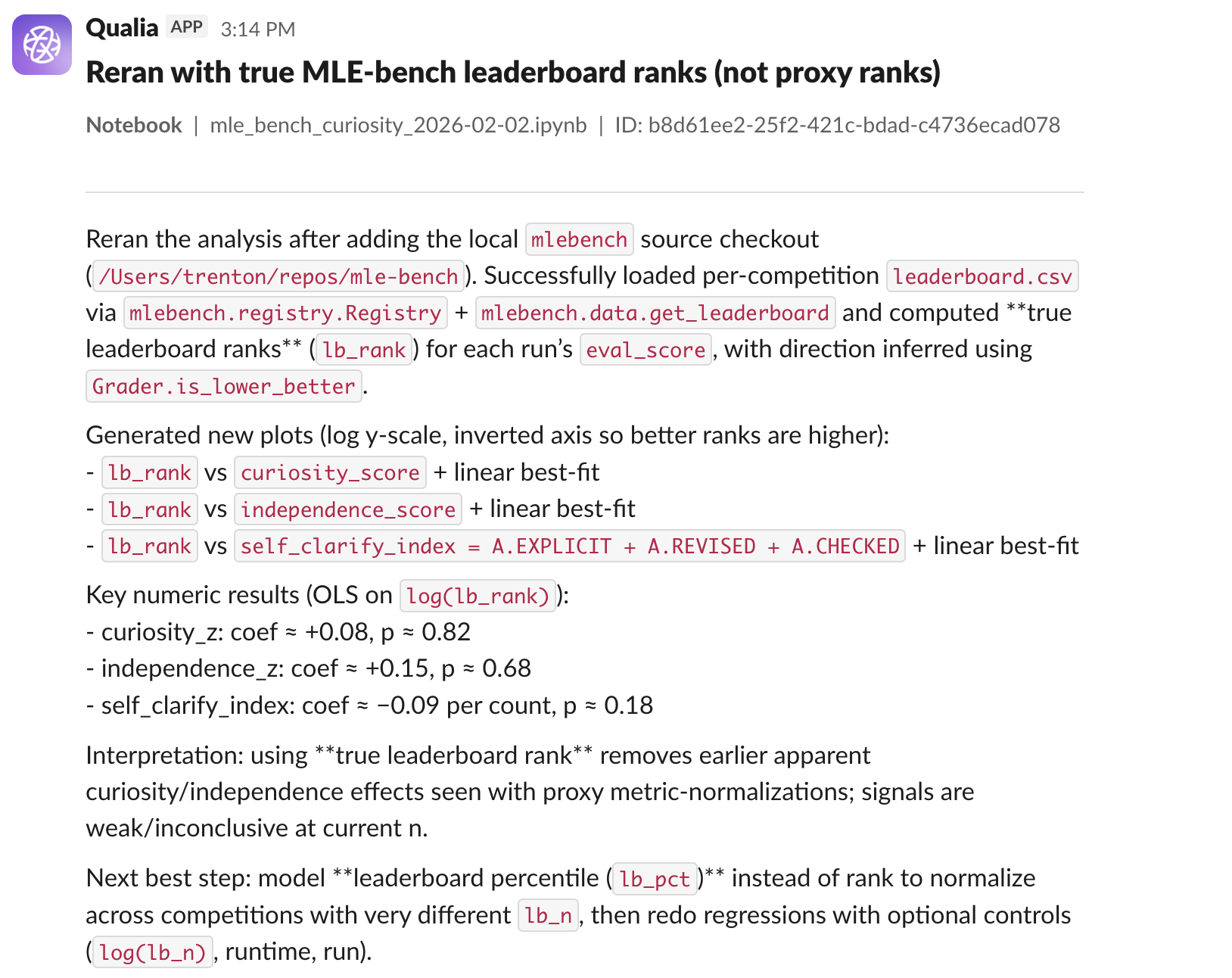

Propose a direction in Slack, and Qualia runs for hours—training models, testing variations, and reporting what works.

“This feels like a superpower.”


For researchers

Everything in Free, plus:

For power users

Everything in Pro, plus:

For organizations with custom needs